So say I have a theoretical scene on a sunny day that includes some rocks of various values on a field of fresh snow. I use my blinkies to set the highest exposure that doesn't blow out the brightest snow. In theory, if the camera had 16 stops of dynamic range, what would I see on the computer? For example, would a shadow directly under a rock show some detail?

That depends in part on the contrast ratio/dynamic range of your monitor, including the image bit depth it can accept. If, like most monitors, yours is limited to 8 bit image depth (255,255,255 whites), then the extra DR in the image won't be directly visible. But that extra bit depth at capture and in processing (e.g. 'hi-bit' processing of 14 bit raw files) can allow you to pull up shadows without excessive noise or quantization artifacts, so that those shadow areas fit within your monitor (or print) output capabilities.
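To put rough numbers on why capture bit depth matters for shadows: in a linear raw encoding each stop below clipping gets half the code values of the stop above it. The sketch below assumes a purely linear encoding (real 8 bit JPEGs are gamma encoded, which gives shadows more levels than this), and `levels_per_stop` is a hypothetical helper name used only for illustration.

```python
def levels_per_stop(bits: int, stops: int) -> list[int]:
    """Distinct code values in each stop below clipping, brightest stop first.

    Assumes a linear encoding: stop k (k = 0 is the brightest) covers the
    range [2**bits / 2**(k + 1), 2**bits / 2**k), so it holds half as many
    values as the stop above it. Stops deeper than the bit depth get 0.
    """
    total = 2 ** bits  # e.g. 16384 codes for 14 bit, 256 for 8 bit
    return [total // 2 ** (k + 1) for k in range(stops)]


if __name__ == "__main__":
    for bits in (8, 14):
        print(f"{bits:>2} bit linear:", levels_per_stop(bits, 14))
```

In this model the eighth stop below clipping has a single level left in a linear 8 bit file, while 14 bit capture still has 64 there. That headroom is what lets you lift shadows before converting to an 8 bit output file.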

As you noted in your first post, the output medium has a dynamic range limit of its own. If your monitor doesn't have as many stops of dynamic range, you won't see deeply into shadows even if the image was captured with sufficient DR and you processed it in a hi-bit mode. That's even more dramatic in prints, where many processes like commercial offset or inkjet printing may be limited to around 5 stops of dynamic range regardless of the captured scene, the camera's capabilities and the processing chain. One of the tricks is to process images to fit within the DR of the output medium with tools like Curves, Highlight and Shadow recovery, dodging, burning, etc.
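Stops and contrast ratios are related by powers of two (each stop doubles the light), so a 5 stop print spans roughly a 32:1 contrast range. The sketch below shows that conversion plus a deliberately crude way of squeezing a wide scene range into a narrower output range by scaling linearly in stops. The function names, the linear mapping and the example numbers are illustrative assumptions, not how Curves or Shadow recovery actually work.

```python
import math


def contrast_to_stops(ratio: float) -> float:
    """Dynamic range in stops for a contrast ratio, e.g. 1000 for 1000:1."""
    return math.log2(ratio)


def stops_to_contrast(stops: float) -> float:
    """Contrast ratio (brightest:darkest) spanned by a number of stops."""
    return 2.0 ** stops


def compress_stops(scene_ev: float, scene_dr: float, output_dr: float) -> float:
    """Map a scene value given in stops below clipping (0 = white, negative =
    darker) linearly into the output range, also in stops below white.

    A crude global stand-in for a tone curve; real shadow/highlight tools
    work locally and are not linear in stops.
    """
    scene_ev = max(scene_ev, -scene_dr)  # anything deeper than the scene range clips to black
    return scene_ev * (output_dr / scene_dr)


if __name__ == "__main__":
    print(round(contrast_to_stops(1000), 2))  # a 1000:1 monitor in stops
    print(stops_to_contrast(5))               # a 5 stop print as a contrast ratio
    # hypothetical rock shadow 8 stops below the snow, 16 stop capture, 5 stop print
    print(compress_stops(-8, 16, 5))
```

Under this naive mapping the shadow lands 2.5 stops below paper white, which shows why a 16 stop capture can still hold visible shadow detail in a 5 stop print, at the cost of flattening local contrast everywhere.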

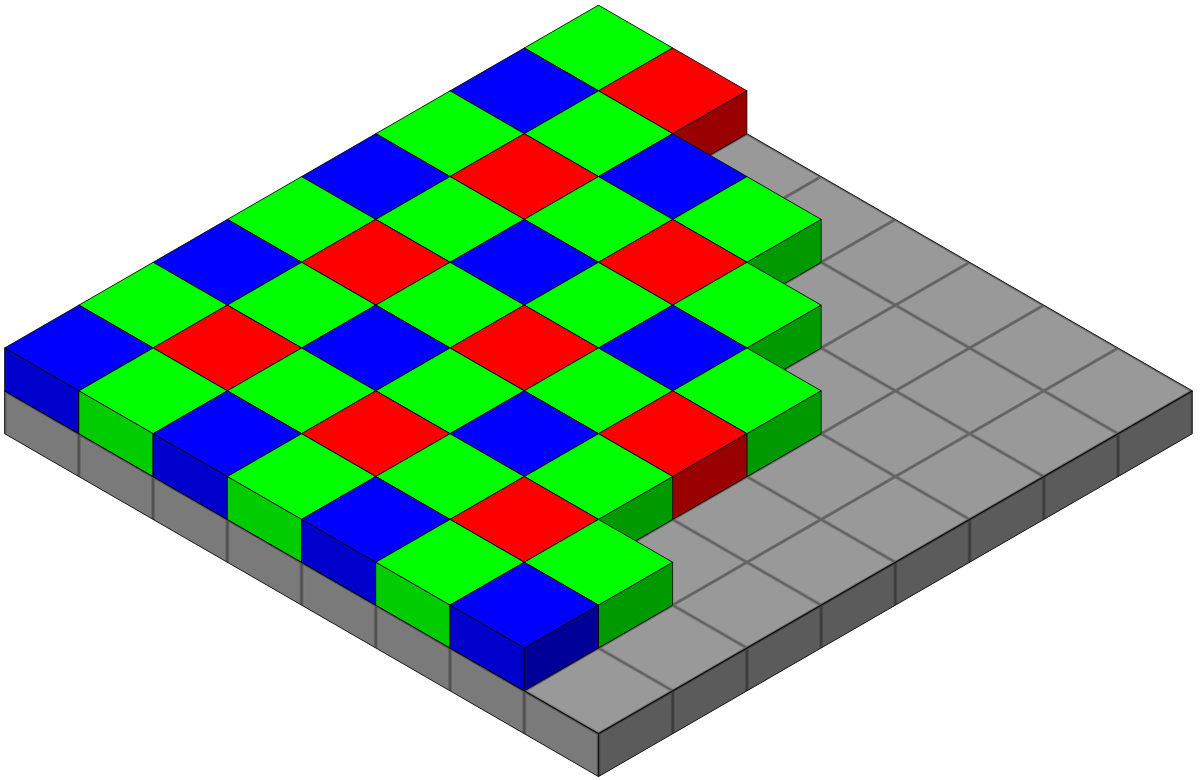

FWIW, I look at it as a series of steps, each with its own DR characteristics:

- The scene itself and the lighting create the initial DR demands. A relatively low contrast scene in soft light might only have a few stops of DR, but a high contrast scene in direct light with shadows can push things very hard. The classic example is a black wool tux next to a white satin wedding dress photographed under harsh midday light. It's a very common situation for outdoor wedding photographers: if you want detail in both the blacks and the whites under hard light that casts shadows, and you can't fill with flash or bounce cards, that kind of scene can push DR requirements to or beyond the limit of many cameras.

- The camera's DR at the shooting ISO. This is the one we tend to focus on, and the place where we can buy our way to more latitude as technology improves, but it's only one step in the chain. Even in great light with a moderate contrast scene, more DR never hurts, since it lets us make modest exposure mistakes without penalty. In high DR scenes and lighting it can be very important.

- The processing, whether that means editing 8 bit images or doing RAW conversion into a hi-bit mode on import. If you keep everything in 8 bit mode, harsh scenes like the example above may be clipped or may end up showing quantization artifacts (banding, posterization) after processing to try to recover detail.

- The output medium and its DR capabilities, which range from just a handful of useful stops when printing to more on a high contrast monitor. None of this is infinite, so it places restrictions on what's possible in the displayed image from a DR perspective. And as with all web sharing, we can't control the DR of remote viewers' monitors, so we process to an 8 bit sRGB image that will look good to most viewers even if it doesn't reveal the full DR or color gamut capabilities of the rest of our image processing chain.
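The processing step is where working bit depth shows up as banding. The toy model below assumes linear values with no gamma curve and uses hypothetical helper names (`quantize`, `push_shadows`). It stores a smooth dark gradient at a given working bit depth, brightens it by a number of stops in floating point, and counts how many distinct 8 bit output levels survive.

```python
def quantize(x: float, bits: int) -> int:
    """Clip a value to 0..1 and round it to the nearest code at the given bit depth."""
    top = 2 ** bits - 1
    return round(min(max(x, 0.0), 1.0) * top)


def push_shadows(working_bits: int, stops: float, samples: int = 1000) -> int:
    """Count distinct 8 bit output levels after brightening a smooth dark
    gradient by `stops` while it is stored at `working_bits` (toy model)."""
    gain = 2.0 ** stops
    top = 2 ** working_bits - 1
    levels = set()
    for i in range(samples):
        scene = (i / (samples - 1)) / gain       # gradient that reaches 0..1 only after the push
        stored = quantize(scene, working_bits)   # what the working file can hold
        pushed = (stored / top) * gain           # brightening, done in float
        levels.add(quantize(pushed, 8))          # final 8 bit output file
    return len(levels)


if __name__ == "__main__":
    print("8 bit working file: ", push_shadows(8, 3))
    print("16 bit working file:", push_shadows(16, 3))
```

In this model a +3 stop push from an 8 bit working file leaves only a few dozen output levels spread across the full 0-255 range, which reads as posterization, while the same push from a 16 bit working file fills essentially every output level.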